CRUXEval#

Note

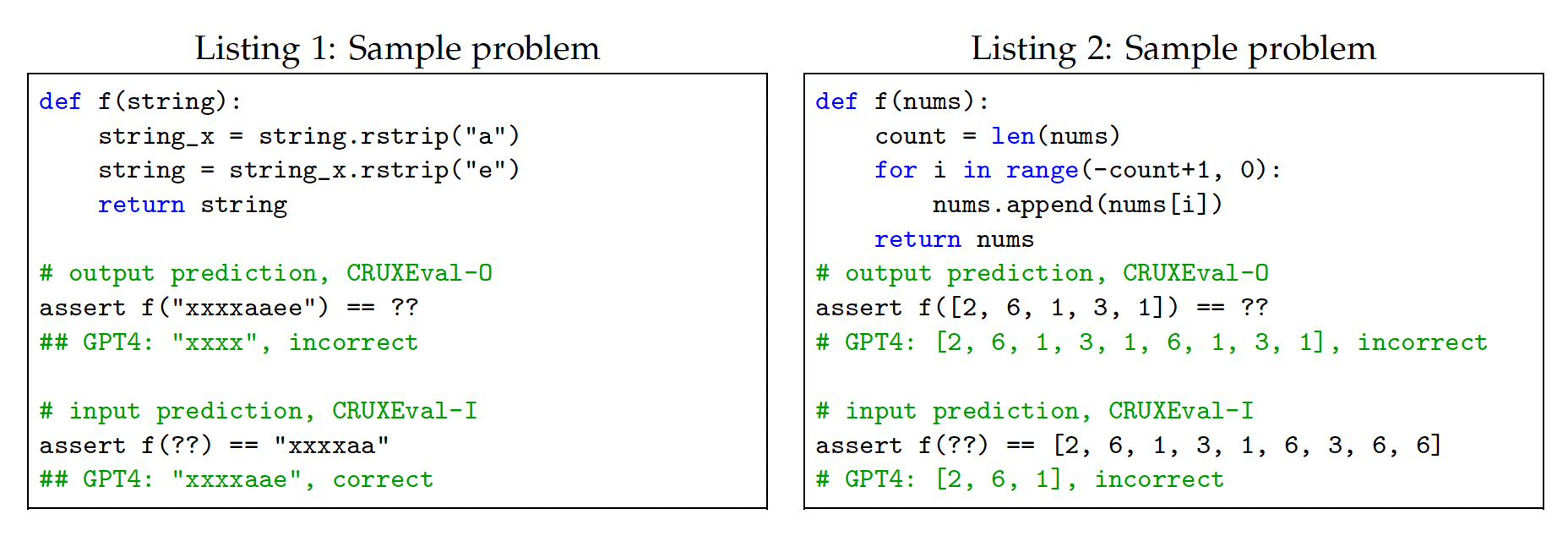

CRUXEval[GRL+24] (Code Reasoning, Understanding, and eXecution Evaluation) is a benchmark consisting of 800 Python functions and input-output pairs. The benchmark consists of two tasks, CRUXEval-I (input prediction) and CRUXEval-O (output prediction).

The benchmark was constructed as follows: first, we use Code Llama 34B to generate a large set of functions and inputs. The outputs are generated by executing the functions on the inputs. Second, we filter the set so that our benchmark only consists of short problems with low computation and memory requirements, problems which a good human programmer should be able to do without extra memory in a minute or so. Third, we randomly select 800 samples passing the filter, ensuring the benchmark is both small enough to easily run but large enough to reliably see performance differences among various models.